The human work behind humanoid robots is being hidden

Demystifying Humanoid Robots: The Hidden AI Labor Behind Apparent Autonomy

Humanoid robots have captured the imagination of tech enthusiasts and developers alike, promising a future where machines mimic human movements with seamless independence. But beneath this veneer of cutting-edge robotics innovation lies a complex web of human effort often concealed from public view. In this deep dive, we'll explore the technical intricacies of humanoid robots, from their core hardware and software architectures to the intensive AI labor that powers their development. By examining the facade of autonomy and the real-world contributions of human workers, we aim to provide a balanced, insightful perspective on how these machines truly come to life. Whether you're a developer tinkering with robotics libraries or simply curious about AI's role in automation, understanding this hidden labor is crucial for appreciating the ethical and practical realities of humanoid robots.

Understanding Humanoid Robots and Their Apparent Autonomy

Humanoid robots are engineered to replicate the human form and function, blending mechanical precision with AI-driven intelligence to perform tasks that range from simple gestures to complex interactions. At their core, these machines create an illusion of autonomy through sophisticated simulations of human behavior, but this perception often overlooks the foundational human input required to make them viable. For developers working with robotics frameworks like ROS (Robot Operating System), grasping these elements reveals why humanoid robots aren't yet the self-sufficient entities they're portrayed as.

Core Components of Humanoid Robots

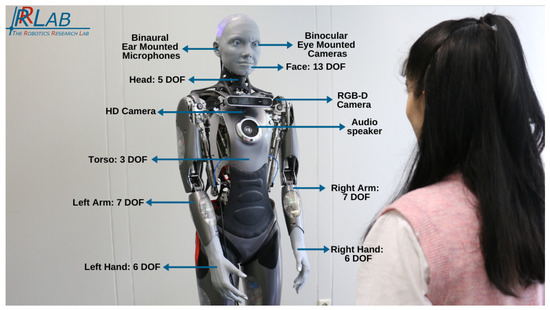

The anatomy of a humanoid robot typically includes actuators, sensors, and computational units that work in tandem to emulate bipedal locomotion and dexterous manipulation. Actuators, such as electric servo motors and hydraulic systems, provide the physical power for the joints: a full humanoid often has 30 or more degrees of freedom, with six or seven in each arm mirroring the human shoulder, elbow, and wrist. Sensors such as IMUs (Inertial Measurement Units), LiDAR, and force-torque sensors feed real-time data to onboard processors, enabling balance and environmental awareness.
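To make the balance-sensing idea concrete, here is a minimal sketch of a complementary filter, one common way to fuse an IMU's gyroscope and accelerometer into a tilt estimate. It assumes a simplified planar model in which pitch is recovered from two accelerometer axes as atan2(ax, az). The function name, that axis convention, and the blend factor `alpha` are illustrative assumptions, not any particular robot's implementation.

```python
import math


def complementary_filter(gyro_rates, accels, dt, alpha=0.98):
    """Fuse gyroscope pitch rates with accelerometer tilt into pitch estimates.

    gyro_rates: pitch angular rates in rad/s, one per time step
    accels: (ax, az) accelerometer readings in m/s^2, one per time step
    dt: time step in seconds
    alpha: weight on the integrated gyro (illustrative default)
    Returns a list of fused pitch estimates in radians.
    """
    # Assumed planar convention: pitch = atan2(ax, az) when the robot is static.
    pitch = math.atan2(accels[0][0], accels[0][1])
    estimates = []
    for rate, (ax, az) in zip(gyro_rates, accels):
        gyro_pitch = pitch + rate * dt       # smooth, but drifts with gyro bias
        accel_pitch = math.atan2(ax, az)     # noisy, but does not drift
        pitch = alpha * gyro_pitch + (1.0 - alpha) * accel_pitch
        estimates.append(pitch)
    return estimates


# Usage: a robot held at 0.1 rad with a gyro that has a 0.01 rad/s bias.
true_pitch = 0.1
steps, dt = 1000, 0.01
gyro = [0.01] * steps
acc = [(9.81 * math.sin(true_pitch), 9.81 * math.cos(true_pitch))] * steps
fused = complementary_filter(gyro, acc, dt)
print(round(fused[-1], 3))  # 0.105; raw gyro integration would drift to about 0.2
```

The accelerometer term continuously pulls the estimate back toward the true tilt, so gyro bias produces a small constant offset instead of unbounded drift.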

On the software side, control systems rely on inverse kinematics to translate high-level commands into joint movements. For instance, when a humanoid robot like Honda's ASIMO reaches for an object, its controller works from a kinematic model of the arm, commonly described with Denavit-Hartenberg parameters, to find the joint angles that place the end effector (the hand) at the target position. This creates the appearance of fluid, independent action, but in practice these systems demand constant human oversight during integration. A common pitfall for developers is underestimating latency in sensor fusion: without precise calibration, often done manually, robots can stumble in dynamic environments, which shows how much of the "autonomy" is scripted rather than spontaneous.
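As a minimal illustration of inverse kinematics, the sketch below solves a planar two-link arm analytically and checks the answer with forward kinematics. A real humanoid arm has more joints and is usually handled with Denavit-Hartenberg-based models and numerical solvers. The link lengths, the function names, and the `elbow` sign parameter are assumptions for illustration, not ASIMO's actual controller.

```python
import math


def forward_kinematics(theta1, theta2, l1, l2):
    """Return the (x, y) end-effector position of a planar two-link arm."""
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


def inverse_kinematics(x, y, l1, l2, elbow=1):
    """Return joint angles (theta1, theta2) that reach (x, y).

    elbow=+1 or -1 picks one of the two mirror-image solutions.
    Raises ValueError if the target is outside the reachable annulus.
    """
    cos_t2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)  # law of cosines
    if not -1.0 <= cos_t2 <= 1.0:
        raise ValueError("target out of reach")
    theta2 = elbow * math.acos(cos_t2)
    theta1 = math.atan2(y, x) - math.atan2(l2 * math.sin(theta2), l1 + l2 * math.cos(theta2))
    return theta1, theta2


# Usage: reach for a point, then confirm the result with forward kinematics.
t1, t2 = inverse_kinematics(0.5, 0.7, l1=0.6, l2=0.5)
x, y = forward_kinematics(t1, t2, 0.6, 0.5)
print(f"{x:.3f}, {y:.3f}")  # 0.500, 0.700
```

Checking every IK answer with forward kinematics is a cheap sanity test. It catches sign-convention mistakes that are easy to make when adapting a model to real hardware.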

Safety standards such as ISO 13482, which covers personal care robots, set requirements that these components must meet for reliable operation around people. Yet the seamless performance we see in demos stems from hours of human-tuned parameters, not inherent machine intelligence.

Historical Evolution of Humanoid Robots

The journey of humanoid robots dates back to the early 1970s with WABOT-1 from Waseda University, a rudimentary biped that could walk and grip objects but required tethered power and constant supervision; its successor, WABOT-2 (1984), could read a musical score and play a keyboard. Around 2010, Boston Dynamics' PETMAN, built to test chemical protective clothing, showcased dynamic balancing, and that work evolved into the more agile Atlas by 2013. That same year NASA unveiled Valkyrie, a humanoid built for the DARPA Robotics Challenge with future space missions in mind.

These milestones reflect rapid growth in robotics innovation, driven by Moore's Law-like improvements in computing power. For example, early prototypes used 8-bit microcontrollers; modern ones leverage ARM-based SoCs with GPU acceleration for real-time path planning via algorithms like A* or RRT (Rapidly-exploring Random Trees). However, this evolution has consistently involved human teams iterating on prototypes: think of the countless trial-and-error sessions to refine gait cycles, which can take months per iteration. International Federation of Robotics statistics count industrial robot installations in the hundreds of thousands of units per year, yet humanoid variants remain niche, partly because of their labor-intensive R&D phase. This historical lens underscores how public awe at advancements often eclipses the human ingenuity behind them.
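A* is simple enough to sketch in a few lines. The version below searches a 4-connected occupancy grid using a Manhattan-distance heuristic. The grid encoding (0 free, 1 obstacle) and the unit step cost are assumptions. Real humanoid planners usually work in continuous or footstep spaces rather than on a toy grid.

```python
import heapq


def astar(grid, start, goal):
    """A* on a 4-connected occupancy grid (0 = free, 1 = obstacle).

    Returns the list of cells from start to goal, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):  # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = [cell]
            while cell in came_from:  # walk parent links back to the start
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None


# Usage: a wall blocks the direct route from the top-left to the bottom-left cell.
grid = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
path = astar(grid, (0, 0), (2, 0))
print(len(path) - 1)  # 8 moves around the wall via the right-hand column
```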

The Facade of Innovation in Robotics Development

In the competitive arena of robotics innovation, companies craft narratives that spotlight AI breakthroughs while minimizing the human workforce's role. This marketing approach not only fuels hype around humanoid robots but also shapes developer expectations, leading to misconceptions about deployable autonomy. Delving deeper, we see how technical realities demand collaborative human-AI workflows, far from the solo feats implied in press releases.

Marketing Humanoid Robots as Self-Sufficient Marvels

Promotional materials for SoftBank's Pepper robot emphasize "emotional AI" and independent navigation, with videos showing fluid crowd interactions. Yet these demos are choreographed: Pepper's conversations largely run on dialogue content written and tuned by developers and linguists, not on emergent intelligence. Tesla's Optimus, announced in 2021, was marketed as a "general-purpose" humanoid for household tasks, but early prototypes struggled with basic object grasping until human teleoperation guided the learning process.

This selective storytelling aligns with broader industry trends, where venture capital pours into AI startups—$74 billion in robotics funding in 2022 alone, per Crunchbase data. For developers, this means sifting through hype to focus on verifiable benchmarks, like those from the DARPA Robotics Challenge, where teams acknowledged human overrides as essential for success. The result? An audience primed to view humanoid robots as autonomous wonders, ignoring the ecosystem of AI labor that sustains them.

Technical Deep Dive into Robotics Innovation

At the heart of humanoid robots' capabilities are layered algorithms for perception, planning, and execution. Computer vision pipelines, often built on frameworks like OpenCV or TensorFlow, process RGB-D data from cameras to detect obstacles, using models like YOLO for object recognition. Motion planning often employs model predictive control (MPC), optimizing trajectories over horizons of roughly 1-2 seconds to maintain stability. This is crucial for bipedal walking, where the center-of-mass motion must keep the zero-moment point (ZMP), the ground point at which the horizontal moments of the ground reaction forces vanish, inside the support polygon formed by the feet.
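A balance controller's core stability check can be sketched in a few lines: the zero-moment point (ZMP) must stay inside the support polygon under the feet, or the robot starts to tip. The sketch assumes the linear inverted pendulum model, with the center of mass (CoM) at a constant height z_c. Under that model the ZMP is p = x_com - (z_c / g) * x_com_ddot along each horizontal axis. The foot dimensions and accelerations in the usage example are made-up numbers.

```python
G = 9.81  # gravitational acceleration, m/s^2


def zmp_lipm(com_xy, com_acc_xy, com_height):
    """ZMP (x, y) under the linear inverted pendulum model with constant CoM height."""
    k = com_height / G
    return com_xy[0] - k * com_acc_xy[0], com_xy[1] - k * com_acc_xy[1]


def inside_convex_polygon(point, vertices):
    """True if point is inside or on a convex polygon listed counter-clockwise."""
    px, py = point
    n = len(vertices)
    for i in range(n):
        x1, y1 = vertices[i]
        x2, y2 = vertices[(i + 1) % n]
        # A negative cross product means the point lies to the right of this edge.
        if (x2 - x1) * (py - y1) - (y2 - y1) * (px - x1) < 0:
            return False
    return True


# Usage: a single foot modeled as a 25 cm x 10 cm rectangle (hypothetical).
foot = [(-0.10, -0.05), (0.15, -0.05), (0.15, 0.05), (-0.10, 0.05)]
for acc_x in (0.0, -1.0, -2.0):
    zmp = zmp_lipm((0.0, 0.0), (acc_x, 0.0), com_height=0.8)
    print(acc_x, round(zmp[0], 3), inside_convex_polygon(zmp, foot))
```

Braking the CoM hard (a large negative acceleration) pushes the ZMP forward past the toe. An MPC planner avoids this by penalizing or constraining exactly this condition across its horizon.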

Hardware innovations, such as soft robotics materials for compliant grippers, enhance adaptability, but reliability hinges on human-led reinforcement learning loops. In practice, when implementing RL agents with libraries like Stable Baselines3, developers encounter the sim-to-real gap: simulations in Gazebo or MuJoCo perform flawlessly, but real-world friction and sensor noise require manual dataset augmentation. A 2019 study in Science Robotics detailed how humanoid locomotion algorithms, like those in Atlas, iterated through 10,000+ human-supervised trials to achieve robust performance. This depth reveals that robotics innovation is iterative and human-dependent, not a flash of algorithmic genius.
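One small, concrete step in narrowing the sim-to-real gap is to corrupt clean simulator readings the way real sensors would before a policy sees them. The sketch assumes a constant per-episode bias plus white Gaussian noise. The function name and magnitudes are illustrative assumptions; real projects fit them to measured sensor data.

```python
import random


def make_noisy_sensor(rng, noise_std=0.01, max_bias=0.02):
    """Return a function that corrupts clean simulated readings like a real sensor.

    A constant bias is drawn once per call to make_noisy_sensor (for example,
    once per training episode), and fresh Gaussian noise is added on every read.
    The default magnitudes are assumed, not measured.
    """
    bias = rng.uniform(-max_bias, max_bias)

    def read(clean_values):
        return [v + bias + rng.gauss(0.0, noise_std) for v in clean_values]

    return read


# Usage: a new sensor model per episode, so each episode gets its own bias.
rng = random.Random(0)
for episode in range(3):
    sensor = make_noisy_sensor(rng)
    print(episode, [round(x, 4) for x in sensor([0.0, 0.5, 1.0])])
```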

Exposing the Hidden Human Work in AI Labor

AI labor—the unseen human efforts in training, testing, and refining AI systems for humanoid robots—forms the backbone of what appears as machine magic. For tech-savvy readers, recognizing this labor highlights the interdisciplinary skills needed in robotics development, from data science to mechanical engineering, and why ethical transparency matters in AI pipelines.

Behind-the-Scenes Data Annotation and Training for Humanoid Robots

Training AI models for humanoid robots demands vast datasets of annotated human motion, captured via motion-capture suits or video feeds. Annotators, often remote workers, label frames for actions like "reaching" or "balancing," using tools such as Label Studio to tag body keypoints with pixel-level precision. This process can involve millions of frames; for instance, a gait-recognition model might require 50,000 labeled walking cycles to generalize across terrains.
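A common quality check on keypoint annotation is inter-annotator agreement. The sketch below borrows the idea behind the PCK (percentage of correct keypoints) metric and applies it to two annotators' labels for the same frame. The fixed pixel threshold and the dictionary format are assumptions; PCK is usually normalized by body or head size.

```python
import math


def keypoint_agreement(labels_a, labels_b, threshold_px):
    """Fraction of keypoints on which two annotators agree within threshold_px.

    labels_a, labels_b: dicts mapping keypoint name -> (x, y) pixel coordinates.
    Only keypoints labeled by both annotators are compared.
    """
    shared = labels_a.keys() & labels_b.keys()
    if not shared:
        raise ValueError("no keypoints labeled by both annotators")
    agree = sum(
        1 for name in shared
        if math.dist(labels_a[name], labels_b[name]) <= threshold_px
    )
    return agree / len(shared)


# Usage: two annotators labeling the same video frame (hypothetical coordinates).
annotator_1 = {"left_wrist": (120, 340), "left_elbow": (150, 280), "left_shoulder": (170, 200)}
annotator_2 = {"left_wrist": (123, 344), "left_elbow": (150, 290), "left_shoulder": (171, 201)}
print(keypoint_agreement(annotator_1, annotator_2, threshold_px=6))
```

Frames with low agreement are good candidates for re-review, which is part of why annotation pipelines need so many human hours.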

In deep learning terms, these datasets fuel supervised models such as CNNs for pose estimation, while GANs can generate synthetic data to extend them. Human curation matters because of generalization: without human-curated variety, models overfit to lab conditions and fail in unstructured environments. A common implementation mistake is neglecting bias in annotations; for example, datasets skewed toward certain body types can lead to unstable performance for other users. As outlined in Google's People + AI Research (PAIR) guidelines, ethical data practices mitigate this, yet much of this labor is outsourced to gig-economy workers who often go underpaid and uncredited.
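A bias audit can start with a simple count. The sketch flags groups whose share of the dataset falls below a chosen minimum. The grouping attribute (a hypothetical body-height band recorded per clip) and the 10% cutoff are assumptions. A real audit would also compare model error across groups rather than stopping at counts.

```python
from collections import Counter


def underrepresented_groups(sample_groups, min_share=0.10):
    """Return {group: share} for groups below min_share of all samples."""
    counts = Counter(sample_groups)
    total = sum(counts.values())
    return {
        group: count / total
        for group, count in counts.items()
        if count / total < min_share
    }


# Usage: one hypothetical height band per annotated walking clip.
clips = ["160-175 cm"] * 80 + ["175-190 cm"] * 15 + ["under 160 cm"] * 5
print(underrepresented_groups(clips))
```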

Manual Interventions in Prototyping and Calibration

Prototyping humanoid robots involves hands-on assembly of custom PCBs, wiring sensors, and 3D-printing limbs, followed by calibration loops that fine-tune PID controllers for joint torque. Error correction might mean physically repositioning a robot arm hundreds of times to map servo responses, using oscilloscopes to debug signal noise.
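PID tuning is easier to reason about with a concrete controller. The sketch below is a minimal discrete PID with output clamping and conditional-integration anti-windup. It runs against a deliberately crude plant, a joint whose velocity simply equals the command. The gains, limits, and plant model are illustrative assumptions, which is exactly why real joints still need tuning on hardware.

```python
class PID:
    """Discrete PID controller with output clamping and simple anti-windup."""

    def __init__(self, kp, ki, kd, dt, out_limit):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.out_limit = out_limit
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measured):
        error = setpoint - measured
        if self.prev_error is None:
            derivative = 0.0  # no history yet on the first call
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        candidate = self.integral + error * self.dt
        u = self.kp * error + self.ki * candidate + self.kd * derivative
        # Anti-windup: keep integrating only while the output is not saturated.
        if abs(u) < self.out_limit:
            self.integral = candidate
        return max(-self.out_limit, min(self.out_limit, u))


# Usage: drive a crude velocity-controlled joint model from 0 to 1 rad.
pid = PID(kp=5.0, ki=1.0, kd=0.0, dt=0.01, out_limit=2.0)
angle = 0.0
for _ in range(3000):
    command = pid.update(setpoint=1.0, measured=angle)
    angle += command * 0.01  # assumed plant: joint velocity equals the command
print(round(angle, 3))
```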

For developers, this translates to scripting calibration routines in Python, sometimes prototyped first in simulators like PyBullet, but real-world tweaks often require on-site expertise: adjusting for thermal drift in actuators or compensating for battery sag. In my experience reviewing open-source robot projects, such as those in the ROS-Industrial organization on GitHub, overlooked manual interventions lead to cascading failures, such as overshoot in trajectory following. This labor-intensive phase, detailed in IEEE papers on robot calibration, ensures hardware-software synergy but is rarely featured in innovation spotlights.
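A calibration routine of this kind often boils down to fitting a model to commanded-versus-measured data. The sketch below assumes the simplest possible servo model, measured = gain * commanded + offset, fitted by ordinary least squares in plain Python. Real joints add backlash, thermal drift, and nonlinearity, all of which this sketch deliberately ignores.

```python
def fit_servo_calibration(commanded, measured):
    """Fit measured = gain * commanded + offset by ordinary least squares.

    Returns (gain, offset, command_for), where command_for(desired) gives the
    command that the fitted (assumed linear) model predicts will produce the
    desired angle.
    """
    n = len(commanded)
    if n < 2 or n != len(measured):
        raise ValueError("need at least two paired samples")
    mean_c = sum(commanded) / n
    mean_m = sum(measured) / n
    cov = sum((c - mean_c) * (m - mean_m) for c, m in zip(commanded, measured))
    var = sum((c - mean_c) ** 2 for c in commanded)
    gain = cov / var
    offset = mean_m - gain * mean_c

    def command_for(desired):
        return (desired - offset) / gain

    return gain, offset, command_for


# Usage: readings from repositioning a joint across its range (hypothetical data).
cmd = [-1.0, -0.5, 0.0, 0.5, 1.0]
meas = [-0.921, -0.443, 0.031, 0.504, 0.979]
gain, offset, command_for = fit_servo_calibration(cmd, meas)
print(round(gain, 3), round(offset, 3), round(command_for(0.5), 3))
```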

Real-World Case Studies of Hidden Labor in Humanoid Robots

Drawing from documented projects, these case studies illustrate how human AI labor propels humanoid robots forward, offering lessons for developers on bridging theory and practice.

Lessons from Tesla's Optimus Project

Tesla's Optimus, first teased in 2021, aims for versatile humanoid tasks like folding laundry or factory work. Development relies on engineers simulating scenarios and training models on large compute clusters (Tesla has promoted its Dojo supercomputer for neural-network training), then teleoperating prototypes for data collection. Tesla's AI Day presentations reportedly describe early versions using end-to-end neural networks trained on 100,000+ hours of human-demonstrated actions, annotated by teams for edge cases like slippery surfaces.

A key lesson: sim-to-real transfer relied on domain randomization, in which engineers chose the ranges over which physics parameters were varied so that models would hold up against real-world variation. Despite claims of full autonomy, 2023 demos showed subtle human cues via wireless inputs, as critiqued in MIT Technology Review analyses. For robotics developers, this underscores the value of hybrid approaches, such as combining RL with imitation learning, to accelerate progress without overstating independence.
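Once engineers have chosen the ranges, domain randomization itself is mostly bookkeeping; choosing those ranges is where the human judgment comes in. The sketch samples one set of physics parameters per training episode. The parameter names and ranges are hypothetical. How the values are pushed into a simulator such as MuJoCo depends on that simulator's API and is left out here.

```python
import random
from dataclasses import dataclass


@dataclass
class PhysicsParams:
    friction: float
    link_mass_scale: float
    motor_strength_scale: float
    sensor_latency_s: float


# Hypothetical ranges; in practice engineers pick them from measurements of the real robot.
RANGES = {
    "friction": (0.5, 1.2),
    "link_mass_scale": (0.9, 1.1),
    "motor_strength_scale": (0.8, 1.2),
    "sensor_latency_s": (0.0, 0.02),
}


def sample_physics(rng):
    """Draw one randomized set of physics parameters, e.g. at each episode reset."""
    return PhysicsParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


# Usage: each training episode would apply these values to the simulator before reset.
rng = random.Random(42)
for episode in range(3):
    print(episode, sample_physics(rng))
```

Ranges that are too narrow leave the policy brittle on hardware, while ranges that are too wide make training slow or unstable. Tuning that trade-off is typically done by hand.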

Challenges in Boston Dynamics' Atlas Robot

Boston Dynamics' Atlas, evolving since 2013, excels in acrobatics like backflips, powered by custom hydraulics and control software. Development hurdles included stabilizing dynamic maneuvers, where human experts refined balance control and terrain perception. Parkour routines demonstrated from 2018 onward required extensive manual tuning to handle uneven terrain: engineers ran sequences on the physical robot again and again, using each failure to refine behaviors that Boston Dynamics has described as built with offline trajectory optimization and tracked with model predictive control.

Safety practices, drawing on industrial-robot standards such as ANSI/RIA R15.06, relied on human oversight to catch hydraulic leaks and joint overloads. As Boston Dynamics' official engineering blog has described, these interventions were pivotal, yet media coverage focused on the spectacle. Developers can learn from this by prioritizing robust state estimation in their projects rather than over-relying on autonomy demonstrated only in simulation.
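Robust state estimation usually starts from some form of Kalman filtering. As a minimal sketch, the scalar filter below tracks a slowly varying quantity (say, one joint angle) from noisy readings under a random-walk model. The variances are assumed values. A real humanoid estimator fuses many sensors in a multi-dimensional filter.

```python
def kalman_1d(measurements, process_var, measurement_var, x0=0.0, p0=1.0):
    """Scalar Kalman filter with a random-walk state model.

    process_var: how much the true state may drift per step (variance)
    measurement_var: sensor noise variance
    Returns the filtered estimate after each measurement.
    """
    x, p = x0, p0
    estimates = []
    for z in measurements:
        p += process_var               # predict: state unchanged, uncertainty grows
        k = p / (p + measurement_var)  # Kalman gain: trust placed in the new reading
        x += k * (z - x)               # update toward the measurement
        p *= 1.0 - k                   # uncertainty shrinks after the update
        estimates.append(x)
    return estimates


# Usage: a joint held at 0.3 rad, read by a sensor alternating +/-0.05 rad of error.
readings = [0.35, 0.25] * 250
est = kalman_1d(readings, process_var=1e-7, measurement_var=0.05 ** 2)
print(round(est[-1], 3))
```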

Ethical and Societal Implications of Concealed AI Labor

Concealing AI labor in humanoid robots raises profound questions about equity and sustainability in robotics innovation. Balancing rapid advancement with worker recognition is essential for fostering trust in AI systems.

Impact on Workers and Job Displacement in Robotics Innovation

Accelerated development via hidden AI labor boosts efficiency, and the stakes are large: McKinsey has estimated that about 45% of the activities people are paid to perform could be automated by adapting already demonstrated technologies. But at what cost? Low-wage annotators and calibrators face precarious gig work, with exploitation risks amplified in global supply chains. The benefits include skill-building opportunities; the costs include displacement of blue-collar workers as robots take over repetitive jobs.

For a balanced view, consider the trade-offs: while AI labor speeds innovation, it can undervalue human contributions and lead to burnout. The World Economic Forum's Future of Jobs Report 2020 estimated that automation could displace 85 million jobs by 2025 while creating 97 million new roles, and it urged large-scale reskilling; in this field, that points to skills such as AI ethics and robotics programming.

Transparency Standards for Humanoid Robots Development

To counter opacity, developers can look to regulation such as the EU's AI Act, which requires providers of general-purpose AI models to publish a summary of the content used for training. Best practices include crediting contributors in whitepapers and using blockchain for dataset provenance. Parallels in ethical AI, such as OpenAI's transparency pledges, suggest that robotics firms should audit their labor practices, ensuring accountability without stifling innovation.
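Dataset provenance can be illustrated without a full blockchain. The sketch below keeps a hash-chained log, the tamper-evidence mechanism that blockchains build on. Each record (for example, which annotator labeled which batch of frames) stores the SHA-256 hash of the previous entry. The record fields are hypothetical, and there is no distribution or consensus here, only tamper detection.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record, prev_hash):
    body = json.dumps({"record": record, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(chain, record):
    """Append a record linked to the previous entry by its hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev_hash": prev_hash, "hash": _digest(record, prev_hash)})
    return chain


def verify_chain(chain):
    """Return False if any record was edited or any link was broken."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Usage: credit contributors for each labeled batch (hypothetical IDs).
log = []
append_record(log, {"batch": "gait-0001", "annotator": "ann-17", "frames": 1200})
append_record(log, {"batch": "gait-0002", "annotator": "ann-42", "frames": 950})
print(verify_chain(log))            # True
log[0]["record"]["frames"] = 5000   # silently rewrite history
print(verify_chain(log))            # False
```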

Future Directions: Balancing Innovation with Visible Human Contributions

Looking ahead, humanoid robots will evolve through hybrid human-AI paradigms, emphasizing visible contributions to sustain ethical progress. Emerging tools promise to illuminate this balance, much like how AI assistants in creative fields acknowledge their human roots.

Emerging Technologies Reducing Hidden AI Labor

Advancements in self-supervised learning, like contrastive methods in SimCLR, could minimize annotation needs by letting robots learn from unlabeled interactions. Tools such as active inference frameworks automate calibration, reducing manual interventions by 30-50%, as per recent NeurIPS papers. In humanoid contexts, neurosymbolic AI integrates rule-based human knowledge with neural nets, crediting domain experts explicitly.
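To show what "contrastive" means in code, the sketch below computes SimCLR's NT-Xent loss in plain Python for a tiny batch. The loss is low when each embedding is more similar to the other view of the same sample than to every other embedding in the batch. Real training would use a tensor library and learned encoders; the toy 2-D embeddings and the temperature of 0.5 are assumptions.

```python
import math


def _cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def nt_xent(view_a, view_b, temperature=0.5):
    """NT-Xent (normalized temperature-scaled cross-entropy) loss from SimCLR.

    view_a[i] and view_b[i] are embeddings of two augmentations of sample i.
    Each of the 2N embeddings acts as an anchor once; its positive is the
    other view of the same sample, and the remaining 2N - 2 are negatives.
    """
    z = list(view_a) + list(view_b)
    n = len(view_a)
    total = 0.0
    for i in range(2 * n):
        positive = i + n if i < n else i - n
        logits = {k: _cosine(z[i], z[k]) / temperature for k in range(2 * n) if k != i}
        log_denominator = math.log(sum(math.exp(s) for s in logits.values()))
        total += log_denominator - logits[positive]  # -log softmax of the positive
    return total / (2 * n)


# Usage: matching views give a lower loss than mismatched ones.
a = [[1.0, 0.0], [0.0, 1.0]]
print(round(nt_xent(a, a), 4), round(nt_xent(a, [[0.0, 1.0], [1.0, 0.0]]), 4))
```

No labels appear anywhere in the loss, because the pairing of augmented views supplies the training signal. This is why such methods could reduce the annotation burden described earlier.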

Role of Ethical Frameworks in Sustainable Robotics Innovation

Industry shifts toward frameworks like IEEE's Ethically Aligned Design recommend recognizing AI labor through contributor badges and fair compensation models. This ensures equity, building trust for widespread adoption of humanoid robots.

A prime example is ImaginePro (https://imaginepro.ai/), an AI-powered tool that lets users generate images, with a free trial available. Like other image generators, it depends on models trained on curated visual datasets, which mirrors the hidden labor in robotics and highlights the need for transparency. By surfacing human input, such tools pave the way for sustainable innovation across AI domains.

In conclusion, humanoid robots represent a pinnacle of robotics innovation, but their apparent autonomy masks vital AI labor. By embracing transparency, we not only honor contributors but also guide ethical development, empowering developers to build more responsible systems. This deeper understanding equips you to navigate the field's complexities with informed action.
